Why AI Is Pushing Hardware to Its Limits

The Role of Nanotechnology in Modern Semiconductor Scaling

Nanoscale Transistors and Emerging Architectures

Advanced Materials and Neuromorphic Concepts

Overcoming Memory and Interconnect Bottlenecks

Thermal Management at Extreme Power Densities

Barriers to Scaling and Manufacturing Constraints

Conclusion and Outlook

References

Nanotechnology is central to the AI hardware race because it offers new ways to reduce heat, improve memory performance, and extend semiconductor scaling as conventional chip design approaches its limits.

Image Credit: yucelyilmaz/Shutterstock.com

As AI models grow larger and more computationally demanding, and as more people use them, progress depends not just on better algorithms but on hardware that can move data faster and more efficiently while managing extreme power densities.

Artificial intelligence (AI) has transformed tasks that once required large chunks of time and resources into near-instantaneous operations. Modern AI systems can generate complex code, analyze massive datasets in real time, and enable scientific breakthroughs such as protein structure prediction.1

This progress is tightly coupled with advances in semiconductor technology, particularly high-performance hardware from NVIDIA and lithography innovations from ASML, which continue to drive the scaling of AI models.

However, sustaining this growth is increasingly constrained by fundamental hardware limitations. Heat generation in densely packed processors and large-scale data centers is pushing cooling systems to their limits, while memory speed and bandwidth are not keeping pace with computational throughput.2 Simultaneously, nanoscale fabrication faces challenges such as defects, variability, and increased resistance in interconnects.

Why AI Is Pushing Hardware to Its Limits

The pressure AI workloads exert on modern hardware stems from a fundamental shift in scaling.

Historically, performance improvements followed Moore’s Law, where transistor miniaturization yielded predictable gains in speed and efficiency. AI progress today is increasingly governed by scaling laws, in which performance improves with increases in model size, training data, and computational intensity.3

This shift has created a structural imbalance between processing capability and data delivery.

Central to this imbalance is the von Neumann architecture, which physically separates computation and memory. This separation requires continuous data transfer between the processor and memory, introducing latency and significant energy overhead.

While processors such as GPUs have scaled rapidly, memory bandwidth has lagged behind, leaving compute units idle while they wait for data, a limitation widely known as the memory wall. In large-scale AI training, a substantial portion of total energy consumption is devoted to data movement rather than computation.
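
The memory wall can be made concrete with the roofline model, a common way of bounding attainable throughput by the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity (operations performed per byte fetched). The sketch below is illustrative only: the peak throughput and bandwidth are round, hypothetical figures and do not describe any particular accelerator.

```python
def attainable_flops(peak_flops, mem_bandwidth, arithmetic_intensity):
    """Roofline bound: min(peak compute, bandwidth * FLOPs-per-byte)."""
    return min(peak_flops, mem_bandwidth * arithmetic_intensity)


# Hypothetical accelerator (assumed round numbers, for illustration only).
PEAK_FLOPS = 1.0e15       # 1 PFLOP/s peak compute
MEM_BANDWIDTH = 2.0e12    # 2 TB/s memory bandwidth

# Arithmetic intensity (FLOPs per byte) above which the chip is compute-bound.
ridge_point = PEAK_FLOPS / MEM_BANDWIDTH

for intensity in (1, 10, 100, 1000):
    achieved = attainable_flops(PEAK_FLOPS, MEM_BANDWIDTH, intensity)
    print(f"{intensity:>5} FLOP/byte -> {achieved / PEAK_FLOPS:6.1%} of peak")
```

Under these assumed figures, any workload performing fewer than 500 operations per byte fetched is limited by memory bandwidth rather than by the processor.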

This challenge is further amplified by the exponential growth of global data generation, which is now measured in zettabytes per year. High-resolution video, sensor networks, and multimodal AI datasets demand extreme bandwidth and storage performance, making efficient data movement a central challenge in next-generation computing systems.

The Role of Nanotechnology in Modern Semiconductor Scaling

As device dimensions approach atomic scales, classical scaling laws are breaking down. One fundamental limitation is the Boltzmann limit, which sets a lower bound on the subthreshold swing (~60 mV/decade at room temperature). This restricts further reductions in operating voltage and directly impacts energy efficiency.
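
The figure of roughly 60 mV/decade follows from the standard expression for an ideal thermionic switch, in which the minimum subthreshold swing is ln(10)·kT/q. The short calculation below evaluates that expression as a sketch; the temperatures other than 300 K are arbitrary choices for comparison, and the function name is introduced here for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23        # Boltzmann constant (SI value)
ELEMENTARY_CHARGE_C = 1.602176634e-19   # elementary charge (SI value)


def thermionic_swing_mv_per_decade(temperature_k):
    """Minimum subthreshold swing, ln(10) * kT/q, in mV per decade."""
    thermal_voltage = BOLTZMANN_J_PER_K * temperature_k / ELEMENTARY_CHARGE_C
    return math.log(10) * thermal_voltage * 1e3


for temperature_k in (77, 300, 400):
    swing = thermionic_swing_mv_per_decade(temperature_k)
    print(f"{temperature_k} K: {swing:.1f} mV/decade")
```

Because the bound scales linearly with temperature, it cannot be lowered at a fixed operating temperature by shrinking the device alone, which is why it limits voltage scaling.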

Overcoming this limitation will require alternative device concepts that exploit quantum-mechanical effects, such as tunneling, to enable sharper switching at lower power.

Nanoscale Transistors and Emerging Architectures

To address these constraints, the semiconductor industry is transitioning beyond FinFETs toward gate-all-around (GAA) transistor architectures, which provide improved electrostatic control.

Intel’s RibbonFET technology, introduced at the 18A node, exemplifies this transition, alongside innovations such as backside power delivery that enhance performance per watt.4

Similarly, TSMC’s N2 node and Samsung’s GAA implementations reflect industry-wide adoption of these advanced architectures.5

Experimental device concepts aim to push beyond the limits of silicon-based scaling. Vertical nanowire transistors based on materials such as indium arsenide offer high electron mobility at reduced voltages. Tunnel field-effect transistors (TFETs), which switch by quantum-mechanical band-to-band tunneling rather than thermionic emission over an energy barrier, present another promising pathway toward ultra-low-power switching in future AI hardware.6

Advanced Materials and Neuromorphic Concepts

Material innovation is enabling entirely new computing paradigms. 2D materials, such as MoS₂ and graphene, allow precise control of electron transport at atomic thicknesses. Concurrently, neuromorphic computing systems based on memristive behavior are gaining traction.

In such systems, ion movement mimics synaptic activity, enabling learning through conductance modulation. Research prototypes from IBM and HP Labs demonstrate that these devices can significantly reduce the need for data transfers between memory and processing units, addressing a key inefficiency in conventional architectures.
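
One common way to picture how memristive devices avoid data transfers is the crossbar array, in which each device's conductance stores a weight and the array computes a matrix-vector product in place through Ohm's law and current summation. The sketch below models an ideal crossbar only; it is not a description of the IBM or HP Labs prototypes, and the array size, conductances, and voltages are hypothetical values.

```python
def crossbar_output_currents(conductances, input_voltages):
    """Column currents of an ideal crossbar: I_j = sum_i V_i * G[i][j]."""
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for voltage, row in zip(input_voltages, conductances):
        for j, conductance in enumerate(row):
            currents[j] += voltage * conductance   # Ohm's law per device
    return currents


# Hypothetical 3x2 array: conductances (siemens) act as the stored weights.
G = [
    [1e-6, 2e-6],
    [3e-6, 1e-6],
    [2e-6, 4e-6],
]
V = [0.2, 0.1, 0.3]   # read voltages in volts (assumed values)

print(crossbar_output_currents(G, V))
```

The weights never leave the array: only the input voltages and the summed output currents cross its boundary, which is the source of the reduced data movement. Real devices add non-idealities such as wire resistance and conductance drift that this sketch ignores.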

Overcoming Memory and Interconnect Bottlenecks

Mitigating the memory wall requires minimizing data movement. High-bandwidth memory (HBM), closely integrated with accelerators such as NVIDIA’s H100, places memory in close proximity to computing units, dramatically increasing throughput.
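
The throughput advantage of stacked memory comes largely from interface width: peak bandwidth is the number of data lines multiplied by the per-pin data rate. The comparison below is a rough sketch with assumed interface parameters, chosen to contrast a wide HBM-style stack with a narrow off-package memory device; they are not taken from the specification of the H100 or any other product.

```python
def peak_bandwidth_gb_per_s(bus_width_bits, data_rate_gbit_per_pin):
    """Peak bandwidth = interface width * per-pin data rate, in GB/s."""
    return bus_width_bits * data_rate_gbit_per_pin / 8


# Assumed, illustrative interface parameters (not tied to a specific product).
wide_stacked = peak_bandwidth_gb_per_s(1024, 6.4)     # wide, HBM-style interface
narrow_discrete = peak_bandwidth_gb_per_s(32, 16.0)   # narrow off-package interface

print(f"wide stacked interface:    {wide_stacked:.1f} GB/s")
print(f"narrow discrete interface: {narrow_discrete:.1f} GB/s")
```

Even at a lower per-pin rate, the wide interface delivers more than ten times the bandwidth in this example, and such a wide interface is only practical when the memory sits within the same package as the processor.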

In parallel, optical interconnects are being explored to transmit data using photons rather than electrons, significantly reducing latency and energy consumption. Backside power delivery further enhances efficiency by separating power distribution from signal routing within the chip.7

Thermal Management at Extreme Power Densities

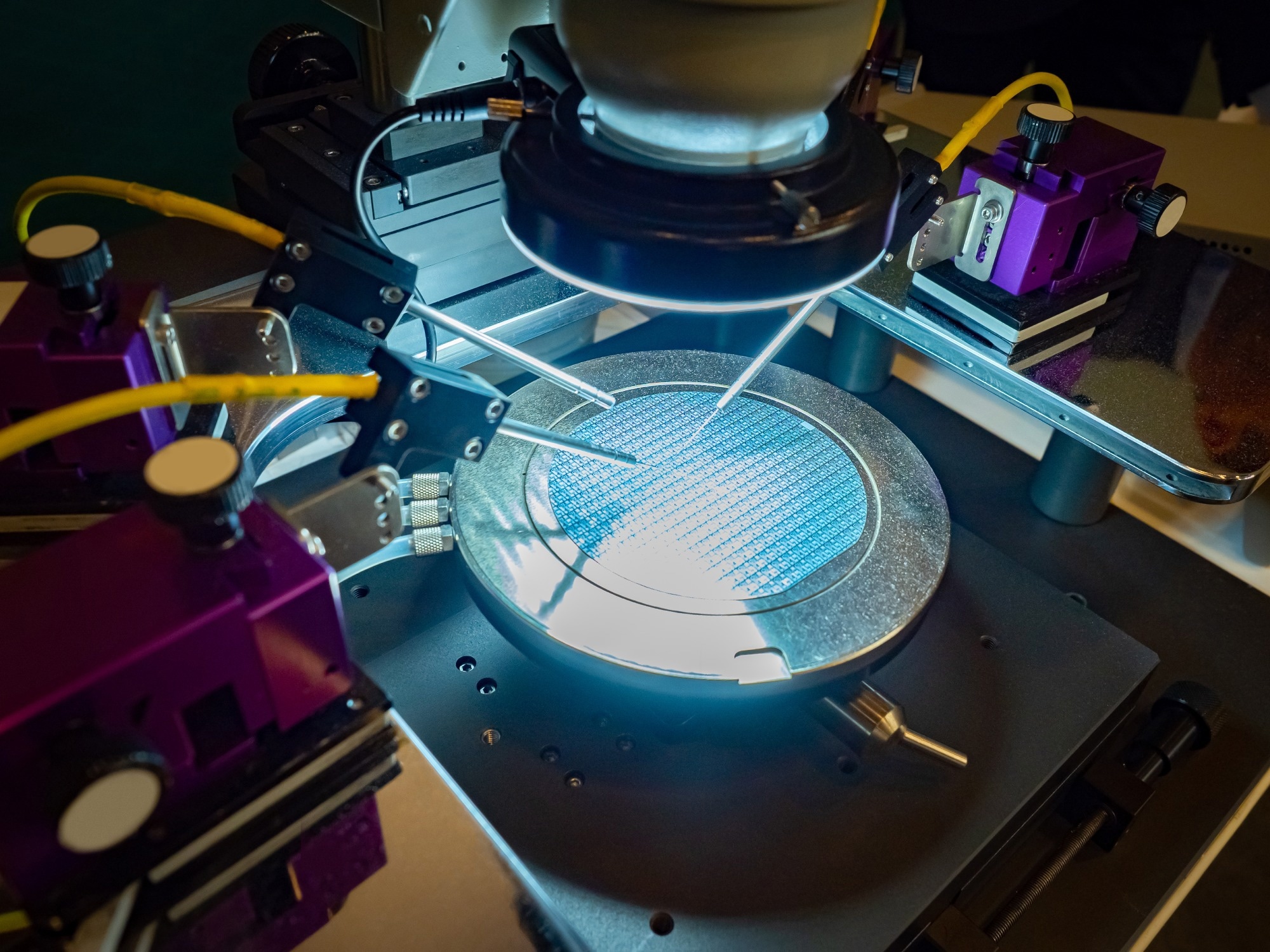

Image Credit: FOTOGRIN/Shutterstock.com

Thermal constraints are becoming critical as power densities increase, particularly in 3D integrated circuits, where localized hotspots emerge. Even modest temperature increases can significantly degrade device reliability and lifespan, so advanced cooling strategies are being developed to keep operating temperatures in check.

Microfluidic cooling systems enable liquid flow through microscopic channels within chips, directly removing heat from active regions. Additionally, high-thermal-conductivity materials such as graphene and synthetic diamond are being investigated to improve heat dissipation in high-performance AI processors.8
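
The benefit of a high-thermal-conductivity layer can be estimated with steady-state one-dimensional conduction, where the temperature drop across a slab equals heat flux times thickness divided by conductivity. The sketch below is a simplified estimate under stated assumptions: the hotspot flux and layer thickness are hypothetical, and the conductivities are rough room-temperature values that vary considerably with material quality and, for graphene, with direction.

```python
def slab_temperature_drop_k(heat_flux_w_per_m2, thickness_m, conductivity_w_per_mk):
    """Steady-state 1-D conduction: delta_T = q'' * t / k."""
    return heat_flux_w_per_m2 * thickness_m / conductivity_w_per_mk


HOTSPOT_FLUX = 1.0e7       # 1 kW/cm^2, assumed hotspot heat flux
LAYER_THICKNESS = 1.0e-4   # 100 micrometre layer, assumed

# Rough room-temperature conductivities in W/(m*K); approximate values only.
MATERIALS = {"silicon": 150.0, "copper": 400.0, "diamond": 2000.0}

for name, conductivity in MATERIALS.items():
    drop = slab_temperature_drop_k(HOTSPOT_FLUX, LAYER_THICKNESS, conductivity)
    print(f"{name:>8}: {drop:.2f} K across the layer")
```

The model ignores lateral heat spreading and interface resistances, both of which matter in real packages, so it indicates relative rather than absolute performance.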

Barriers to Scaling and Manufacturing Constraints

Despite rapid technological advancements, semiconductor scaling is increasingly limited by economic and supply chain constraints. Advanced fabrication facilities require enormous capital investment, and ASML's extreme ultraviolet (EUV) lithography systems can cost hundreds of millions of euros per unit.

On top of this, global semiconductor manufacturing is concentrated among a small number of companies, including TSMC and Samsung, creating geopolitical dependencies.9

Material supply introduces additional risks. Critical elements such as gallium, germanium, tungsten, and cobalt are essential for advanced semiconductor devices, yet their production is geographically concentrated. Recent export restrictions on gallium have demonstrated how material supply constraints can directly affect semiconductor manufacturing, underscoring the need for resilient, diversified supply chains.

Conclusion and Outlook

The future of AI hardware is shaped by a convergence of nanotechnology, materials science, and advanced engineering. From multibillion-transistor GPUs to quantum-enabled nanoscale devices, progress in AI hardware increasingly depends on rethinking the fundamental principles that have defined computation to date.10

Looking ahead, major breakthroughs are likely to arise from integrating novel materials, architectures, and memory paradigms into unified computing systems. As AI inevitably continues to scale, the ability to engineer devices at the atomic level will be a decisive factor in determining future computational performance.

References

1. Secundo G, Spilotro C, Gast J, Corvello V. The transformative power of artificial intelligence within innovation ecosystems: a review and a conceptual framework. Review of Managerial Science. 2025;19(9):2697-728. DOI: 10.1007/s11846-024-00828-z

2. Katari M, Jeyaraman J, Mohamed IA, Thirunavukkarasu K. Addressing power and thermal challenges in advanced packaging for AI CPUs/GPUs: Insights into multi-die stacking technology. International Journal for Multidisciplinary Research. 2023;5(6):1-8. DOI: 10.36948/ijfmr.2023.v05i06.17017

3. NVIDIA Blog. How Scaling Laws Drive Smarter, More Powerful AI. 2025.

4. Intel. Intel 18A Process Technology Simply Explained. 2025.

5. Patterson A. TSMC to Lead Rivals at 2-nm Node, Analysts Say. 2026.

6. NVIDIA. 3 Ways NVIDIA Is Powering the Industrial Revolution. 2025.

7. Ojika D, Patel B, Reina GA, Boyer T, Martin C, Shah P. Addressing the memory bottleneck in AI model training. arXiv preprint arXiv:2003.08732. 2020.

8. Guo F, Suo Z-J, Xi X, Bi Y, Li T, Wang C, et al. Recent developments in thermal management of 3D ICs: A review. IEEE Access. 2025. DOI: 10.1109/ACCESS.2025.3569879

9. Deloitte. 2026 Global Hardware and Consumer Tech Industry Outlook. 2026.

10. Mammadov E, Asgarov A, Mammadova A. The Role of Artificial Intelligence in Modern Computer Architecture: From Algorithms to Hardware Optimization. Porta Universorum. 2025;1(2):65-71. DOI: 10.69760/portuni.010208

Disclaimer: The views expressed here are those of the author expressed in their private capacity and do not necessarily represent the views of AZoM.com Limited T/A AZoNetwork the owner and operator of this website. This disclaimer forms part of the Terms and conditions of use of this website.